Ramadan Social Media Sentiment Analysis in Morocco

DATAFTOUR INITIATIVE BY MDS COMMUNITY

Introduction

In the vibrant landscape of data science and community-driven initiatives, the MDS community stands as a beacon of innovation and collaboration. Founded by Bahae Eddine Halim, the MDS community has brought together a diverse group of passionate individuals, united by their shared commitment to leveraging data science for societal impact.

In this article, we delve into one of the latest endeavors spearheaded by the MDS community: a project aimed at analyzing Moroccan online discourse during Ramadan. With the collective efforts of Bahae Eddine Halim, Loubna Bouljadiane, Soufiane sejjari, Zineb El houz, Ali, Hiba Lbazry, and Imad Nasri, this project sets out to uncover insights from the vast sea of Moroccan Darija comments and tweets.

At its core, the main objective of this project is to harness the power of data science, machine learning, and deep learning to decode the sentiments, topics, and engagement patterns prevalent in Moroccan online conversations during the sacred month of Ramadan. Through meticulous analysis and cutting-edge methodologies, we aim to shed light on the nuanced perspectives and cultural dynamics shaping Moroccan society in the digital age.

Explore a preview of our project: https://moroccansentimentsanalysis.com

Explore the world of Ramadan comments in Moroccan Darija through exploratory data analysis (EDA), uncovering patterns, trends, and insights from platforms like Facebook, Twitter, Hespress, and YouTube. Discover the prevalence of religious themes, temporal engagement patterns, language distribution, topic modeling results, and comment length analysis across the different platforms. Gain valuable insights into online discourse during Ramadan and the significance of EDA in extracting meaningful insights from diverse datasets.

Data Scraping

As we know, in the world of AI the most important thing is the data. Having lots of data is like having a big toolbox: the more tools you have, the easier it is to fix things.

So before we dive into how we scraped our data from sources such as YouTube and Twitter (X), let's understand scraping.

What is scraping?

"Scraping is like gathering ingredients for a recipe, but from websites instead of the grocery store."

Scraping is simply the process of extracting data from websites. It allows us to collect information for purposes such as research or building datasets for analysis. In the context of our project, we're specifically interested in scraping comments written in Darija (Moroccan Arabic) from different sources.

How do we get data from websites?

There are several methods to perform web scraping, each with its own advantages and limitations. Here are some common methods along with brief explanations:

Manual Scraping: This involves manually copying and pasting data from websites into a local file or database. While simple and straightforward, it is not practical for scraping large amounts of data and is highly inefficient.

Beautiful Soup: Beautiful Soup is a Python library designed for quick and easy scraping of web pages. It provides a simple API for navigating and searching the HTML structure of a webpage, making it ideal for extracting specific information from websites.

Selenium: Selenium is another popular tool for web scraping, particularly useful for scraping dynamic content generated by JavaScript. It allows automation of web browsers to interact with web pages, enabling scraping of content that is rendered dynamically.

API Scraping: Many websites offer APIs (Application Programming Interfaces) that allow developers to access their data in a structured and legal manner. By interacting with these APIs, developers can retrieve data without having to parse HTML or deal with web scraping complexities.

Now, let's relate these methods to our project:

From Hespress:

- We employed a combination of Beautiful Soup and Selenium to extract data from the Hespress website. The data we scraped includes each article's title, published date, and the comments associated with the article.

The process:

Importing Libraries:

The webdriver module from Selenium automates interactions with web browsers, letting developers navigate and interact with web pages programmatically and simulate user actions such as clicking buttons and filling out forms. BeautifulSoup, imported from the bs4 library, provides a versatile and user-friendly way to parse and extract data from HTML and XML files.

import os
import time
from selenium import webdriver
from bs4 import BeautifulSoup

Web Scraping Process:

The process begins by creating a webdriver.Chrome instance, which is given the path to the Chrome WebDriver executable (chromedriver). This instance acts as a bridge between the Python script and the Chrome browser, enabling automated interactions with web pages. Next, the browser is directed to the specified URL using the driver.get(url) command. To give the page time to load before proceeding, a delay of 3 seconds is introduced using time.sleep(3). The HTML source code of the page is then retrieved using driver.page_source. Finally, the retrieved HTML is passed to BeautifulSoup for parsing with BeautifulSoup(src, 'lxml'):

driver = webdriver.Chrome('C:/Users/sejja/chromedriver')  # Assuming valid path
driver.get(url)
time.sleep(3)
src = driver.page_source
soup = BeautifulSoup(src, 'lxml')

Various elements of interest, such as the title, date, tags, and comments, are extracted from the parsed HTML using BeautifulSoup's find and findAll methods. Here is an example of how we extract the title and the comments:

titre = soup.find('h1', {'class': 'post-title'})
if titre:
    titre = titre.get_text().strip()
else:
    titre = "not available"

comments_area = soup.find('ul', {'class': 'comment-list hide-comments'})
comments = []
if comments_area:
    for comment in comments_area.findAll('li', {'class': 'comment even thread-even depth-1 not-reply'}):
        comment_date = comment.find('div', {'class': 'comment-date'})
        comment_content = comment.find('div', {'class': 'comment-text'})
        comment_react = comment.find('span', {'class': 'comment-recat-number'})
        # Keep only comments for which all three fields were found
        if comment_date and comment_content and comment_react:
            comments.append({
                "comment_date": comment_date.get_text(),
                "comment_content": comment_content.get_text(),
                "comment_react": comment_react.get_text()
            })

Finally, the extracted data is returned as a dictionary:

return {'Date': date, 'Titre': titre, 'Tags': tags, 'Comments': comments}

Cleanup:

Calling driver.quit() closes the WebDriver browser instance. Placing the call in a finally block ensures this happens even if an exception occurs, preventing resource leaks.
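As a minimal sketch of this cleanup pattern, the snippet below wraps the page fetch in try/finally. StubDriver and fetch_page_source are hypothetical names introduced only for illustration: the stub stands in for webdriver.Chrome so the example runs without a browser, and it is not the project's actual code.

```python
class StubDriver:
    """Hypothetical stand-in for a Selenium WebDriver (illustration only)."""

    def __init__(self):
        self.closed = False
        self.page_source = ""

    def get(self, url):
        # Simulate a navigation failure for anything that is not an http(s) URL
        if not url.startswith("http"):
            raise ValueError(f"invalid URL: {url}")
        self.page_source = "<html><h1 class='post-title'>Example</h1></html>"

    def quit(self):
        self.closed = True


def fetch_page_source(driver, url):
    """Load `url` and return its HTML, always closing the driver afterwards."""
    try:
        driver.get(url)
        return driver.page_source
    finally:
        # Runs whether driver.get succeeded or raised
        driver.quit()
```

With a real driver the same function body applies unchanged, since it only relies on get, page_source, and quit.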

From YouTube:

YouTube API: The YouTube API provides developers with programmatic access to YouTube's features, including retrieving video metadata, comments, and other relevant data.

The code first fetches details about the specified video, including the channel name and video title. Then, it retrieves comments associated with the video using the 'commentThreads' endpoint. To handle pagination, it iterates through multiple pages of comments, ensuring that all comments are captured.

The extracted data includes essential information such as the video title, channel name, comment date, content, likes, dislikes, author, and number of replies.

The process:

Get a YouTube API key:

To obtain your YouTube Data API key, you need to follow these steps:

1. Sign in to Google:

- Go to the Google Developers Console at console.developers.google.com

- Sign in with your Google account. If you don't have one, you'll need to create it.

2. Create a new project:

- If you don't have any existing projects, you'll be prompted to create one. Click on the "Select a project" dropdown menu at the top and then click on the "New Project" button.

- Enter a name for your project and click on the "Create" button.

3. Enable the YouTube Data API:

- In the Google Cloud Console, navigate to the "APIs & Services" > "Library" page using the menu on the left.

- Search for "YouTube Data API" in the search bar.

- Click on the "YouTube Data API v3" result.

- Click on the "Enable" button.

4. Create credentials:

- After enabling the API, navigate to the "APIs & Services" > "Credentials" page using the menu on the left.

- Click on the "Create credentials" button and select "API key" from the dropdown menu.

- Your API key will be created. Copy it and securely store it.

5. Use your API key:

- Now that you have your API key, you can use it in your applications to access the YouTube Data API.

from googleapiclient.discovery import build

import pandas as pd

# YouTube Data API key

API_KEY = 'your YouTube API key'

- build the YouTube service object :

# Build the YouTube service object . It requires specifying the API name ('youtube'), API version ('v3'), and developer API key (API_KEY) obtained from the Google Developer Console.

youtube_service = build('youtube', 'v3', developerKey=API_KEY)

- retrieve video details

# Retrieve video details using the 'videos' endpoint

video_response = youtube_service.videos().list(

part='snippet',

id=video_id

).execute()

- Extract the essential information, such as the video title, channel name, and comments:

Here we extract the channel name and video title from video_response:

# Extract channel name and video title

channel_name = video_response['items'][0]['snippet']['channelTitle']

video_title = video_response['items'][0]['snippet']['title']

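Here we retrieve the comments along with details such as each comment's date, number of likes, and author, following the page tokens until every page has been read. The fragment is shown wrapped in a function so that it runs as written (the function name is illustrative):

def get_video_comments(video_id, video_title, channel_name):
    comments = []
    nextPageToken = None
    while True:
        response = youtube_service.commentThreads().list(
            part='snippet,replies',
            videoId=video_id,
            textFormat='plainText',
            maxResults=100,  # the API accepts at most 100 results per page
            pageToken=nextPageToken
        ).execute()

        if not response['items']:
            print(f"Comments are disabled for video: {video_id}")
            return comments

        for item in response['items']:
            snippet = item['snippet']['topLevelComment']['snippet']
            comments.append({
                'Video Title': video_title,
                'Channel Name': channel_name,
                'Comment Date': snippet.get('publishedAt', ''),
                'Comment': snippet.get('textDisplay', ''),
                'Likes': snippet.get('likeCount', 0),
                # The comment snippet carries no dislike count, so this stays at 0
                'Dislikes': snippet.get('dislikeCount', 0),
                'Author': snippet.get('authorDisplayName', ''),
                'Replies': item['snippet']['totalReplyCount']
            })

        # Move on to the next page, or stop when there is none
        nextPageToken = response.get('nextPageToken')
        if not nextPageToken:
            break
    return comments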

- Search for YouTube videos based on a query string:

We also added a function that searches for YouTube videos based on a query string, with optional filters for region (we used MA, the code for Morocco) and publication dates (matching the month of Ramadan). It fetches the IDs of the videos matching the search criteria, which can then be used to retrieve additional details or perform other operations on the videos.
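The article does not show this search function, so the following is only a sketch of what it could look like. It assumes the service object built earlier and uses the search.list parameters q, type, regionCode, publishedAfter, publishedBefore, maxResults, and pageToken; the function name search_video_ids and the max_pages safeguard are our own illustrative choices, and dates are assumed to be RFC 3339 strings.

```python
def search_video_ids(youtube_service, query, region_code="MA",
                     published_after=None, published_before=None, max_pages=5):
    """Return the IDs of videos matching `query` (hypothetical helper)."""
    video_ids = []
    page_token = None
    for _ in range(max_pages):  # cap the number of pages to limit quota usage
        params = {
            "q": query,
            "part": "id",
            "type": "video",
            "regionCode": region_code,
            "maxResults": 50,  # search.list accepts at most 50 per page
        }
        # Optional filters are only sent when they are provided
        if published_after:
            params["publishedAfter"] = published_after
        if published_before:
            params["publishedBefore"] = published_before
        if page_token:
            params["pageToken"] = page_token

        response = youtube_service.search().list(**params).execute()
        video_ids.extend(item["id"]["videoId"] for item in response.get("items", []))

        page_token = response.get("nextPageToken")
        if not page_token:
            break
    return video_ids
```

A call could look like search_video_ids(youtube_service, "رمضان", published_after="2024-03-11T00:00:00Z", published_before="2024-04-10T23:59:59Z"), where the two dates are placeholders for whichever window is chosen to cover Ramadan.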

From Twitter:

- Twscrape: a tool for scraping data from Twitter (X). It collects data such as user profiles, follower and following lists, likes and retweets, and supports keyword searches.

The extracted data includes essential information such as the username, content and comment date.

The process:

Fetching Tweets: We use an asynchronous function, gather_tweets(), to retrieve tweets through twscrape's asynchronous API. As with YouTube, we used a query string to retrieve the tweets related to the month of Ramadan. twscrape also needs the credentials of a Twitter (X) account (username, password, and so on).

import asyncio
import twscrape
from twscrape import API, gather
import pandas as pd

class TwitterScraper:
    def __init__(self):
        self.api = API()

    async def gather_tweets(self, query="رمضان", limit=20):
        # The email password is optional; more than one account can be added
        await self.api.pool.add_account("your_twitter_username", "password", "email", "mail_pass")
        await self.api.pool.login_all()
        tweets = await gather(self.api.search(query, limit=limit))
        data = []
        for tweet in tweets:
            tweet_data = {
                'ID': tweet.id,
                'Username': tweet.user.username,
                'Content': tweet.rawContent,
                'Date': tweet.date
            }
            data.append(tweet_data)
            print(tweet.id, tweet.user.username, tweet.rawContent)
        df = pd.DataFrame(data)
        return df

Displaying Tweets: Tweets are displayed on the screen during the retrieval process.

Saving Tweets: Tweet data is saved in either a CSV or JSON file, depending on your choice.
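The saving code is not shown in the article. As a rough sketch of the idea, the helper below picks the output format from the file extension. It deliberately swaps in the standard csv and json modules so that it runs on its own; the name save_tweets and the record layout (the same keys as tweet_data above) are assumptions for illustration.

```python
import csv
import json
from pathlib import Path


def save_tweets(records, path):
    """Write a list of tweet dictionaries to a .csv or .json file."""
    path = Path(path)
    if path.suffix == ".json":
        with path.open("w", encoding="utf-8") as f:
            # ensure_ascii=False keeps Arabic text readable in the file;
            # default=str converts values such as datetimes to strings
            json.dump(records, f, ensure_ascii=False, indent=2, default=str)
    elif path.suffix == ".csv":
        fieldnames = list(records[0].keys()) if records else []
        with path.open("w", encoding="utf-8", newline="") as f:
            writer = csv.DictWriter(f, fieldnames=fieldnames)
            writer.writeheader()
            writer.writerows(records)
    else:
        raise ValueError(f"unsupported file type: {path.suffix}")
```

When the tweets are already in a pandas DataFrame, df.to_csv(...) or df.to_json(...) achieves the same result in one call.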

From Facebook:

- As with the Hespress website, we used a combination of Beautiful Soup and Selenium to extract data from Facebook. The data we scraped includes each post's title, published date, and the comments associated with the post.

The process:

Import necessary libraries:

from bs4 import BeautifulSoup
from selenium import webdriver
from selenium.common.exceptions import NoSuchElementException, TimeoutException
from selenium.webdriver.common.by import By
import time
import pandas as pd

Get the posts from a Facebook page:

We define the function get_facebook_post_data to get posts from a Facebook page. Inside this function we:

- Initialize a Chrome WebDriver, assuming the path to chromedriver is valid.

- Navigate to the provided Facebook page URL.

- Click the "See More Posts" button if present.

- Scroll down to load more posts, based on scroll_count.

- Use BeautifulSoup to parse the page source.

- Extract the post data (title, link, date, reactions, comments) using the specified class names.

- Construct a DataFrame from the extracted data and return it.

Usage example:

series = get_facebook_post_data('https://web.facebook.com/alwa3d4', scroll_count=80)

Use the facebook_scraper library to extract data from Facebook posts:

Import required libraries:

import facebook_scraper as fs
import pandas as pd
from facebook_scraper import exceptions  # Import specific exceptions

Define the FacebookScraper class:

The constructor initializes the maximum number of comments to retrieve (MAX_COMMENTS). The class also has the method getPostData, which takes the parameter post_url, the URL of the Facebook post. This method extracts the post ID from the provided URL, attempts to retrieve the post data using facebook_scraper, and handles potential errors such as missing comments or invalid URLs. If comments are found, it normalizes the JSON data into a DataFrame.

post_id = post_url.split("/")[-1].split("?")[0]  # Extract post ID
print(post_id)
# Attempt to get post data, handling potential errors
gen = fs.get_posts(post_urls=[post_id], options={"comments": self.MAX_COMMENTS, "progress": True})
post = next(gen)
# Handle missing 'comments_full' key
comments = post.get('comments_full', [])  # Use default empty list if missing
if comments:
    df = pd.json_normalize(comments, sep='_')
    return df
else:
    print(f"No comments found for post: {post_id}")
    return None  # Return None to indicate no comments
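One fragile spot in the snippet above is the post ID extraction: splitting on "/" returns an empty string when the URL ends with a trailing slash. A slightly more defensive variant, sketched here with the standard urllib.parse module, could look as follows. The helper name extract_post_id is hypothetical, and the sketch assumes the ID is the last path segment, which does not cover every Facebook URL shape (for example links that carry the ID in the query string).

```python
from urllib.parse import urlparse


def extract_post_id(post_url):
    """Return the last non-empty path segment of a post URL, or None."""
    path = urlparse(post_url).path  # the query string and fragment are dropped here
    segments = [segment for segment in path.split("/") if segment]
    return segments[-1] if segments else None
```

Returning None for a URL without a path lets the caller skip the post instead of sending an empty ID to the scraper.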

In conclusion, data scraping is a crucial process in collecting data from various sources like websites and social media platforms. By utilizing tools like Beautiful Soup, Selenium, and APIs, developers can efficiently gather and analyze data for research and analysis purposes. The ability to extract valuable information from websites, YouTube, Twitter, and Facebook opens up opportunities for AI projects to utilize data effectively.

Data Cleaning

After successfully scraping data from various sources such as Hespress, YouTube, Twitter, and Facebook, the next crucial step is cleaning this diverse dataset. Each source brings its own set of challenges and characteristics, making data cleaning essential to ensure accuracy and reliability in the subsequent analysis. In our cleaning process, we used various techniques to get the data ready for analysis.

Hespress Dataset

Data structure: The Hespress dataset comprises the columns Date, Titre, Tags, and Comments. The Date column records the date and time of posting in Arabic format (e.g., "الجمعة 29 مارس 2024 - 18:00"). Titre is the title or headline of the corresponding content. Tags holds the keywords or labels associated with it. Comments is a string-encoded list of dictionaries, one per comment, each with the keys comment_date (the timestamp of the comment), comment_content (the text of the comment), and comment_react (the reactions received by the comment).

import pandas as pd

hes_data1 = pd.read_csv("/content/drive/MyDrive/DataSets sentiment analysis/hespressComments.csv")

hes_data2 = pd.read_csv("/content/drive/MyDrive/DataSets sentiment analysis/hespressComments2.csv")

We focused solely on two variables: Date and Comments

hes_data2 = hes_data2[['Date','Comments']]

hes_data1 = hes_data1[['Date','Comments']]

Data cleaning:

The Comments variable is initially stored as a string containing a list of dictionaries, each holding comment_date, comment_content, and comment_react. To make further analysis possible, the first step is to convert this string into an actual list of dictionaries.

import ast

def convert_str_to_list_of_dicts(input_str):
    # Parse the string safely; fall back to an empty list on malformed input
    try:
        result_list = ast.literal_eval(input_str)
        if isinstance(result_list, list):
            if all(isinstance(item, dict) for item in result_list):
                return result_list
    except (SyntaxError, ValueError):
        pass
    return []

hes_data1['Comments'] = hes_data1['Comments'].apply(convert_str_to_list_of_dicts)
hes_data2['Comments'] = hes_data2['Comments'].apply(convert_str_to_list_of_dicts)

hes_data = pd.concat([hes_data1, hes_data2])

Beyond this conversion, another important step was data validation. Some rows turned out to contain an empty list of comments, which could skew the analysis, so we identified and removed them from the dataset. This keeps the dataset reliable for the subsequent sentiment analysis.

hes_data = hes_data[hes_data['Comments'].apply(lambda x: len(x) > 0)]

hes_data.reset_index(drop=True, inplace=True)

We then transformed the Comments variable from a list of dictionaries into a structured DataFrame using Python's pandas library, gathering each comment's date and content into a single table. This makes the comment data easier to access and interpret, laying the groundwork for the subsequent sentiment analysis.

comments_list = []
for comment_data in hes_data['Comments']:
    for comment_dict in comment_data:
        comment_date = comment_dict['comment_date'].strip()
        comment_content = comment_dict['comment_content'].strip()
        comments_list.append({'Date': comment_date, 'Comment': comment_content})

hes_data_final = pd.DataFrame(comments_list)

The next step in the data cleaning process involved transforming the date format from its original Arabic format (e.g., 'الجمعة 29 مارس 2024 - 18:00') to a standardized format ('YYYY-MM-DD HH:MM:SS'). This conversion ensured consistency and compatibility with common date-time formats, facilitating easier manipulation and analysis of the data. By implementing this transformation, the dataset's date information was brought into a uniform structure, ready for further analysis and visualization.

from datetime import datetime

def convert_date(date_str):
    # Expected input: '<weekday> <day> <month name> <year> - HH:MM'
    parts = date_str.split()
    day = int(parts[1])
    # Month names as written in Morocco
    month = {
        'يناير': 1, 'فبراير': 2, 'مارس': 3, 'أبريل': 4, 'ماي': 5, 'يونيو': 6,
        'يوليوز': 7, 'غشت': 8, 'شتنبر': 9, 'أكتوبر': 10, 'نونبر': 11, 'دجنبر': 12
    }[parts[2]]
    year = int(parts[3])
    hour, minute = parts[-1].split(':')
    return datetime(year, month, day, int(hour), int(minute))

hes_data_final['Date'] = hes_data_final['Date'].apply(convert_date)

In addition to transforming the date format, we added a new column named 'source' to the DataFrame and assigned it the value 'Hespress' for every row. This makes it easy to identify and categorize the data by source once the datasets are merged.

hes_data_final = hes_data_final.assign(source = 'Hespress')

The final step was detecting the language of each comment. Using the 'langdetect' library, we automatically identified the language of the comment text. This lets us distinguish between comments in different languages, so that the subsequent analysis reflects the linguistic diversity of the dataset.

pip install langdetect

from langdetect import detect, DetectorFactory

DetectorFactory.seed = 0  # make the detection results reproducible

def detect_language(comment):
    try:
        return detect(comment)
    except Exception:
        # Detection fails on empty or non-text input
        return None

hes_data_final['Language'] = hes_data_final['Comment'].apply(detect_language)

YouTube Dataset

Data structure: The YouTube dataset has one row per comment posted on a YouTube video. Its columns are the video title, the channel name, the comment date, the comment content, the number of likes, the number of dislikes, the author's name, and the number of replies the comment has generated.

youtube_folder = "/content/drive/MyDrive/Datasets_Youtube/"

# Fourteen numbered files, "(1)" to "(14)", followed by the unnumbered one
file_names = [f"ramadan_morocco_comments_youtube ({i}).csv" for i in range(1, 15)]
file_names.append("ramadan_morocco_comments_youtube.csv")

youtube_datasets_all = [pd.read_csv(youtube_folder + name) for name in file_names]

df_youtube = pd.concat(youtube_datasets_all, ignore_index=True)

Data cleaning: In the initial phase of cleaning the YouTube dataset, we addressed missing values and duplicate rows. Missing values, if left unhandled, can introduce bias and inaccuracies into the analysis, so we removed every row containing a missing value. We also eliminated duplicate rows so that no comment is counted twice.

df_youtube = df_youtube.dropna()

df_youtube = df_youtube.drop_duplicates()

After handling missing values and duplicates, we removed links from the comments using a regular expression. This step improves the quality of the textual data by removing noise and irrelevant information.

import re

def clean_comment(comment):
    # Regular expression pattern to match URLs
    url_pattern = re.compile(r'http[s]?://(?:[a-zA-Z]|[0-9]|[$-_@.&+]|[!*\(\),]|(?:%[0-9a-fA-F][0-9a-fA-F]))+')
    # Replace URLs with an empty string
    cleaned_comment = re.sub(url_pattern, '', comment)
    return cleaned_comment

# Apply cleaning to comments
df_youtube['Comment'] = df_youtube['Comment'].apply(clean_comment)
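The function above only strips URLs. If special characters should be removed as well, one possible approach is sketched below. The character classes are our own assumption about what counts as noise (everything except Arabic letters, Arabic-Indic digits, Latin letters including accented ones, ASCII digits, and whitespace), and clean_comment_text is a hypothetical name, not a function from the project.

```python
import re

# Links starting with http(s):// or www.
URL_PATTERN = re.compile(r"https?://\S+|www\.\S+")

# Anything that is NOT an Arabic letter, Arabic-Indic digit, Latin letter,
# ASCII digit, or whitespace is treated as noise (assumption for this sketch)
NOISE_PATTERN = re.compile(r"[^\u0621-\u064A\u0660-\u0669A-Za-z\u00C0-\u00FF0-9\s]")


def clean_comment_text(comment):
    """Remove URLs and special characters, then collapse repeated spaces."""
    without_urls = URL_PATTERN.sub(" ", comment)
    without_noise = NOISE_PATTERN.sub(" ", without_urls)
    return " ".join(without_noise.split())
```

Note that this also removes emojis, so in a pipeline that converts emojis to words it would have to run after that conversion.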

After cleaning the links, we detected the language of each comment using the langdetect library, then dropped the comments whose detected language was not relevant to our analysis.

from langdetect import detect, DetectorFactory

DetectorFactory.seed = 0

# Create a function to detect the language of a comment
def detect_language(comment):
    try:
        return detect(comment)
    except Exception:
        return None

# Apply language detection to each comment and create a new column for language
df_youtube['Language'] = df_youtube['Comment'].apply(detect_language)

# Drop rows with None values in the 'Language' column
df_youtube.dropna(subset=['Language'], inplace=True)

# Drop comments detected as English, French, Spanish, or any of the other listed languages
excluded_languages = ['en', 'fr', 'es', 'ro', 'cy', 'no', 'de', 'et', 'si', 'af', 'fi',
                      'pt', 'pl', 'vi', 'sv', 'ca', 'ti', 'lv', 'nl', 'cs', 'th']
df_youtube = df_youtube[~df_youtube['Language'].isin(excluded_languages)]

After detecting the language of the comments, we added a new column called 'source', assigning the value 'Youtube' to each row.

df_youtube['source'] = 'Youtube'

In the final step, we streamlined the dataset by keeping only the essential columns: Comment Date, Comment, Language, and source. The remaining columns are dropped.

columns_to_drop = ['Video Title', 'Channel Name', 'Likes', 'Dislikes', 'Author', 'Replies']

df_youtube.drop(columns=columns_to_drop, inplace=True)

Facebook Dataset

Data structure : The Facebook dataset comprises several columns, including 'comment_text', 'comment_time', and 'Title'. The 'comment_text' column contains the textual content of comments posted on Facebook. The 'comment_time' column records the date and time of each comment's posting, and the 'Title' column likely denotes the title or headline associated with the Facebook post or content. Each row represents a comment posted on Facebook, with corresponding details such as comment text, posting time, and associated title.

facebook_df= pd.read_csv("/content/facebook_comments.csv")

Data cleaning: In the cleaning process, we determine the language of each comment using a classification function based on the characters it contains. A comment containing Arabic-script characters is labeled 'ar' (Darija written in Arabic script); any other comment containing Latin-range characters is labeled 'fr' (Darija written in Latin script, or French). This organizes the data by script, so that later processing can be tailored to each category.

import re

def classify_language(comment):
    """
    Classify the language of a comment as 'ar' (Darija in Arabic script)
    or 'fr' (Darija in Latin script, or French).
    """
    # Regular expressions for Arabic-script and Latin-range characters
    arabic_pattern = re.compile(r'[\u0600-\u06FF\u0750-\u077F\u08A0-\u08FF]+')  # Arabic Unicode ranges
    # ASCII and Latin-1 range; note that it also matches digits, spaces, and punctuation
    latin_pattern = re.compile(r'[\x00-\x7F\x80-\xFF]+')

    # Arabic takes priority: one Arabic character is enough to label the comment 'ar'
    if arabic_pattern.search(comment):
        return 'ar'
    elif latin_pattern.search(comment):
        return 'fr'
    else:
        return None  # Return None if neither kind of character is found

facebook_df['Language'] = facebook_df['comment_text'].apply(classify_language)
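Because a single Arabic character is enough to label a comment 'ar', comments that mix both scripts always land in the Arabic group. A possible refinement, shown here only as a sketch and not as the method used in the project, is to count the letters of each script and pick the majority. The function name and the tie-breaking rule (ties go to 'ar') are assumptions.

```python
import re

ARABIC_CHAR = re.compile(r"[\u0600-\u06FF\u0750-\u077F\u08A0-\u08FF]")
LATIN_LETTER = re.compile(r"[A-Za-z\u00C0-\u00FF]")  # letters only, no digits or spaces


def classify_by_majority_script(comment):
    """Label a comment 'ar' or 'fr' by its dominant script, or None if it has no letters."""
    arabic_count = len(ARABIC_CHAR.findall(comment))
    latin_count = len(LATIN_LETTER.findall(comment))
    if arabic_count == 0 and latin_count == 0:
        return None
    # Ties are resolved in favor of Arabic script (arbitrary choice for this sketch)
    return "ar" if arabic_count >= latin_count else "fr"
```

Unlike the regular expression used above, this version returns None for comments made only of digits, punctuation, or emojis.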

In the final step of cleaning, we add a new column labeled 'source' with the value 'Facebook'. Additionally, we streamline the dataset by selecting only the essential columns, excluding the 'Title' column. This focused approach ensures that the dataset retains pertinent information for analysis, enhancing clarity and efficiency.

facebook_df['source'] = 'Facebook'

facebook_df = facebook_df.drop(columns=['Title'])

NLP for data extraction and stop words removal

Machine Learning heavily relies on the quality of the data fed into it, and thus, data preprocessing plays a crucial role in ensuring the accuracy and efficiency of the model.

Text pre-processing is the process of preparing text data so that machines can use it to perform tasks such as analysis and prediction.

There are many different steps in text pre-processing but in this article, we'll delve into the intricacies of preprocessing Arabic text data for sentiment analysis. From importing datasets to cleaning and preparing text for analysis, we'll explore each step in detail.

Importing Datasets:

To kickstart our preprocessing journey, we begin by importing our datasets. We've curated data from various sources including Facebook, Twitter, Hespress, and YouTube.

import pandas as pd

import os

#import the datasets

folder="C:/Users/sejja/Downloads/Compressed/cleaned_data/cleaned_data/"

df_facebook=pd.read_csv(folder+"facebook_clean.csv")

df_twitter=pd.read_csv(folder+"twitter_clean.csv")

df_hespress=pd.read_csv(folder+"hespress_clean.csv")

df_youtube=pd.read_csv(folder+"youtube_clean.csv")

df_facebook.columns,df_twitter.columns,df_hespress.columns,df_youtube.columns

Normalisation:

Normalisation ensures consistency and coherence in our data. Here, we align column names and select relevant columns from each dataset for further processing.

# Rename columns to match

df_twitter = df_twitter.rename(columns={'Content': 'Comment'})

df_twitter = df_twitter.rename(columns={'language': 'Language'})

df_youtube = df_youtube.rename(columns={'Comment Date': 'Date'})

# Select only the desired columns

df_facebook = df_facebook[['Comment', 'Date', 'Language', 'source']]

df_twitter = df_twitter[['Comment', 'Date', 'Language', 'source']]

df_hespress = df_hespress[['Comment', 'Date', 'Language', 'source']]

df_youtube = df_youtube[['Comment', 'Date', 'Language', 'source']]

# Concatenate the dataframes

df_merge = pd.concat([df_facebook, df_twitter, df_hespress, df_youtube], ignore_index=True)

Replacing Null Values:

Handling missing values is crucial for robust analysis. Here, we replace null values in the 'Language' column with 'ar' (Arabic) and drop rows with null values in the 'Date' column.

# Replace null values in the 'Language' column with 'ar'

df_merge['Language'] = df_merge['Language'].fillna('ar')

# Drop rows with null values in the 'Date' column

df_merge.dropna(subset=['Date'], inplace=True)

Deleting comments with fewer than 2 words

To maintain data quality, we filter out comments with fewer than two words.

df_merge = df_merge[df_merge['Comment'].str.split().str.len() >= 2]
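To see what this filter keeps, here is a minimal sketch on a small hypothetical frame (the sample comments are invented for illustration, and `sample` stands in for `df_merge`). Rows with a missing comment are dropped as well, because the comparison evaluates to False for them.

```python
import pandas as pd

# Hypothetical sample; the real df_merge comes from the concatenation step
sample = pd.DataFrame({"Comment": ["رمضان كريم", "مبروك", None, "تبارك الله عليك"]})

# Number of whitespace-separated tokens per comment (NaN for missing text)
word_counts = sample["Comment"].str.split().str.len()

# Keep comments with at least two words; NaN >= 2 is False, so missing rows drop out
filtered = sample[word_counts >= 2]
print(filtered["Comment"].tolist())
```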

Emoji replacement

In our text processing steps, we treat emojis as meaningful signals of feeling and emotion rather than noise. First, we build a dictionary that maps each emoji to its meaning in words (here from a lookup table, df_emoji, with 'emoji' and 'text' columns). Then a function walks through that dictionary and replaces every occurrence of each emoji in the text with its word meaning. This keeps the emotional content of a comment available to the later text-based steps.

# Create dictionary for emoji replacement (df_emoji holds one row per emoji)

emoji_dict = dict(zip(df_emoji['emoji'], df_emoji['text']))

# Function to replace emojis with their meanings

def replace_emoji(text):

    for emoji, meaning in emoji_dict.items():

        text = text.replace(emoji, meaning)

    return text
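As a variation on the function above, the sketch below does the replacement in a single regular-expression pass and pads each meaning with spaces so it does not fuse with the neighbouring word. The mapping shown is a hypothetical two-entry stand-in for the dictionary built from df_emoji, and the name `replace_emoji_fast` is ours, not part of the project code.

```python
import re

# Hypothetical mapping; in the article it is built from df_emoji
emoji_dict = {"😂": "ضحك", "❤": "حب"}

# One alternation pattern, longest keys first so multi-character emojis win
pattern = re.compile("|".join(
    re.escape(e) for e in sorted(emoji_dict, key=len, reverse=True)
))

def replace_emoji_fast(text):
    # Pad each meaning with spaces so it does not fuse with neighbouring words
    return pattern.sub(lambda m: f" {emoji_dict[m.group(0)]} ", text)

print(replace_emoji_fast("مسلسل زوين😂").split())
```

Splitting on whitespace afterwards, as the later cleaning step does, absorbs the extra spaces.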

Saving Data:

We save the preprocessed data for future analysis, including separate datasets for Arabic and French comments, and a merged dataset for comprehensive analysis.

datasetFr = df_merge[df_merge['Language'] == 'fr']

datasetAr = df_merge[df_merge['Language'] == 'ar']

datasetFr.to_csv("dataset_fr.csv")

datasetAr.to_csv("dataset_ar.csv")

df_merge.to_csv('merge_data.csv')

Preprocessing and Cleaning:

Now comes the heart of our preprocessing pipeline. We'll clean the text data by removing non-Arabic words, tokenizing, removing stopwords, and stemming.

So, let’s get started.

Removing non-arabic words

To ensure that only Arabic text remains for analysis, we utilize a regular expression pattern that matches any characters outside the Unicode range for Arabic script (\u0600 to \u06FF). This pattern effectively identifies and removes any non-Arabic characters, preserving the integrity of the text data for further processing.

Removing punctuation:

Punctuation marks, while essential for readability in natural language, often add noise to text analysis tasks. A second regular expression, [^\w\s], matches every character that is neither a word character (\w) nor whitespace (\s). Replacing those matches with an empty string removes the remaining punctuation, including Arabic punctuation marks that sit inside the Arabic Unicode block and therefore survive the first step, and streamlines the subsequent tokenization process.
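The two patterns described above can be tried on a single string. This is a minimal sketch with an invented sample sentence; it shows that the Arabic comma and question mark pass the first filter and are only removed by the second.

```python
import re

raw = "رمضان كريم!!! Ramadan 2024 ، مبروك؟"

# Step 1: drop everything outside U+0600-U+06FF except whitespace
arabic_only = re.sub(r"[^\u0600-\u06FF\s]", "", raw)

# Step 2: drop what is neither a word character nor whitespace
no_punct = re.sub(r"[^\w\s]", "", arabic_only)

# Collapse the leftover runs of spaces
cleaned = " ".join(no_punct.split())
print(cleaned)
```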

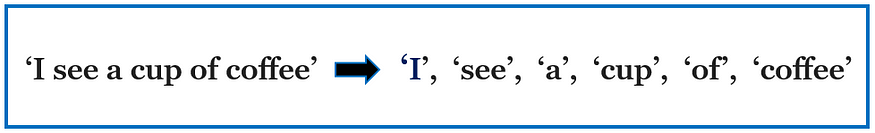

What is tokenization:

Tokenization is the process of breaking down large blocks of text such as paragraphs and sentences into smaller, more manageable units.

What are stop words?

The words that are generally filtered out before processing natural language are called stop words. These are the most common words in a language (articles, prepositions, pronouns, conjunctions, etc.) and do not add much information to the text. Examples of a few stop words in Arabic are

"كل" "لم" "لن" "له" "من" "هو" "هي" .

Why do we remove stop words?

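Stop words are available in abundance in any human language. By removing these words, we remove low-information tokens from our text in order to give more focus to the important information. In other words, removing such words usually has little negative effect on the model we train for our task. One caveat for sentiment analysis: negation particles such as "لم" and "لن" appear in the list above, and dropping them can change the polarity of a sentence, so the stop-word list is worth reviewing before use.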

Removal of stop words definitely reduces the dataset size and thus reduces the training time due to the fewer number of tokens involved in the training.
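Tokenization and stop-word removal can be illustrated in a few lines. The sketch below uses whitespace splitting and a hypothetical stop-word set made of the examples listed above; the project itself uses its own Darija list.

```python
# Hypothetical stop-word subset taken from the examples above
stop_words = {"كل", "لم", "لن", "له", "من", "هو", "هي"}

comment = "هو من احسن مسلسل"

# Whitespace tokenization: one token per space-separated word
tokens = comment.split()

# Keep only the tokens that are not stop words
kept = [t for t in tokens if t not in stop_words]
print(tokens, kept)
```

Using a set rather than a list for the stop words keeps each membership test constant-time, which matters on a large corpus.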

What is stemming:

Stemming is the process of removing prefixes or suffixes from words to obtain their base form, known as the stem. For instance, words like “running,” “runner,” and “runs” share the same root “run.” Stemming helps consolidate words with similar meanings and reduces inflected words to a common form, aiding in tasks like text classification, sentiment analysis, and search engines.

Common Stemming Technique:

Snowball Stemmer: Snowball is a stemming framework created by Martin Porter as a successor to his original Porter algorithm; its English stemmer is also known as Porter2. Snowball provides stemmers for many languages beyond English, including French, German, and, in our case, Arabic.
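As a quick illustration, the sketch below runs NLTK's SnowballStemmer on a few English and Arabic words. The word lists are invented for the example, and the Arabic stems are printed rather than asserted, since the exact output depends on the stemmer's rules.

```python
from nltk.stem.snowball import SnowballStemmer

# One stemmer per language; no extra NLTK data download is needed for these
english = SnowballStemmer("english")
arabic = SnowballStemmer("arabic")

english_stems = [english.stem(w) for w in ["running", "runs"]]
arabic_stems = [arabic.stem(w) for w in ["المسلمون", "مسلسلات"]]

print(english_stems)
print(arabic_stems)
```

Stemming is rule-based, so the result is not always a dictionary word; it only needs to be the same string for related forms.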

Functions used to preprocess data

These functions serve as integral components of our data preprocessing pipeline, designed to clean and standardize textual data for subsequent analysis. By combining techniques such as removing non-Arabic words, eliminating punctuation, tokenizing text, removing stopwords, and applying stemming, we ensure that our data is refined and ready for further analysis.

# Imports and shared objects used by both functions

# (stopwords_darija is the project's own Darija stop-word collection, defined elsewhere)

import re

from nltk.tokenize import word_tokenize  # needs the NLTK tokenizer data to be downloaded

from nltk.stem.snowball import SnowballStemmer

# Build the stemmer once instead of on every call

stemmer = SnowballStemmer('arabic')

# Function to clean and preprocess text without stemming

def clean_text(text):

    # Remove non-Arabic characters

    text = re.sub(r'[^\u0600-\u06FF\s]', '', text)

    # Remove punctuation

    text = re.sub(r'[^\w\s]', '', text)

    # Tokenization

    tokens = text.split()

    # Remove stopwords

    tokens = [word for word in tokens if word not in stopwords_darija]

    # Join tokens back into text

    return ' '.join(tokens)

# with stemming

def preprocess_text(text):

    # Remove non-Arabic characters

    text = re.sub(r'[^\u0600-\u06FF\s]', '', text)

    # Remove punctuation

    text = re.sub(r'[^\w\s]', '', text)

    # Tokenization

    tokens = word_tokenize(text.lower())

    # Remove stopwords

    tokens = [token for token in tokens if token not in stopwords_darija]

    # Stemming - using SnowballStemmer for Arabic

    tokens = [stemmer.stem(token) for token in tokens]

    return ' '.join(tokens)

Finally, we concatenate the preprocessed tokens back into a single string, using ' '.join(tokens), and return the resulting cleaned and normalized text from the function. This consolidated representation of the preprocessed text serves as the foundation for subsequent analysis and modeling tasks, enabling researchers and practitioners to derive meaningful insights from Arabic text data with confidence and accuracy.
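The later EDA sections read a `clean_comment` column, but the step that creates it is not shown. One plausible way to produce it is sketched below; the sample comments and the two-word stop-word set are hypothetical, and `clean_text` is repeated in compact form only so the example runs on its own.

```python
import re
import pandas as pd

stopwords_darija = {"من", "في"}  # hypothetical subset of the project's list

def clean_text(text):
    # Same two regex steps as above, then stop-word filtering
    text = re.sub(r"[^\u0600-\u06FF\s]", "", text)
    text = re.sub(r"[^\w\s]", "", text)
    return " ".join(w for w in text.split() if w not in stopwords_darija)

df = pd.DataFrame({"Comment": ["رمضان كريم من المغرب!", "lol 😂"]})

# Apply the cleaner to every comment and store the result in a new column
df["clean_comment"] = df["Comment"].astype(str).apply(clean_text)

# Comments with no Arabic content become empty strings; drop them
df = df[df["clean_comment"].str.len() > 0]
print(df["clean_comment"].tolist())
```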

- Below is a visual representation of preprocessing execution, showcasing the execution of the preprocessing functions discussed above. This image provides a glimpse into the transformation of raw textual data into a refined and standardized format, illustrating the effectiveness of the cleaning, tokenization, and stemming processes in preparing the data for subsequent analysis.

In Summary:

The steps we've taken to prepare our text data are crucial for making sense of it in natural language processing. By cleaning, organizing, and simplifying the text through various techniques, we've made it more understandable and ready for analysis. This process helps us remove unnecessary information, standardize the text, and focus on what truly matters. With our data in better shape, we're now well-equipped to delve deeper into analysis tasks such as understanding sentiment, categorizing text, and finding relevant information. Overall, these preprocessing steps form a solid foundation for extracting valuable insights from text data, enhancing the accuracy and effectiveness of our analysis.

Unveiling Insights: Exploratory Data Analysis on Ramadan Comments in Moroccan Darija

As the digital sphere continues to evolve, social media platforms serve as a rich source of public opinion and sentiment. In this blog, we delve into the world of Ramadan comments, examining the chatter before and after Iftar across popular platforms like Facebook, Twitter, Hespress, and YouTube. Our focus lies on deciphering patterns, trends, and unique characteristics within the comments, all in the vibrant language of Moroccan Darija.

In this article, you will learn how to:

Conduct word frequency analysis to identify predominant themes in Ramadan comments.

Explore temporal trends, revealing higher activity before Iftar.

Analyze language distribution, with Arabic comments prevailing.

Apply topic modeling to categorize comments into distinct themes.

Perform time series analysis to understand comment dynamics before and after Iftar.

This simplified tutorial will guide you through each phase of EDA applications, with a special focus on interpreting and visualizing the results.

You can find the entire code in the notebook below:

Get ready :)

Introduction to EDA

Exploratory Data Analysis (EDA) serves as the cornerstone of data exploration, offering a systematic approach to uncovering patterns, trends, and insights within datasets. In this section, we delve into the theoretical underpinnings of EDA, laying the groundwork for our journey through the Ramadan comments dataset in Moroccan Darija.

Essence of EDA

At its core, EDA embodies a philosophy of curiosity and discovery, empowering data practitioners to glean meaningful insights from raw datasets. Unlike formal statistical methods, which often require predefined hypotheses, EDA embraces a more flexible and intuitive approach, allowing analysts to let the data speak for itself.

Key Principles of EDA:

Visualization: Visualization lies at the heart of EDA, enabling analysts to transform raw data into insightful plots, charts, and graphs. By visually inspecting the distribution, relationships, and anomalies within the data, analysts can uncover hidden patterns and outliers that may elude traditional statistical methods.

Descriptive Statistics: Descriptive statistics provide a snapshot of the dataset's central tendencies, variability, and distribution. Metrics such as mean, median, standard deviation, and percentiles offer valuable insights into the shape and characteristics of the data, guiding subsequent analysis and interpretation.

Data Cleaning and Preprocessing: Before embarking on exploratory analysis, it is essential to ensure the cleanliness and integrity of the dataset. Data cleaning involves identifying and addressing missing values, outliers, and inconsistencies that may distort the analysis. Additionally, preprocessing steps such as normalization and transformation may be employed to enhance the quality and interpretability of the data.

Pattern Recognition: EDA involves the systematic identification of patterns, trends, and relationships within the dataset. By applying statistical techniques such as correlation analysis, clustering, and dimensionality reduction, analysts can uncover meaningful structures and associations that underlie the data.

Iteration and Iterative Refinement: EDA is an iterative process, wherein analysts continuously refine their analysis based on emerging insights and hypotheses. By iteratively exploring, visualizing, and interpreting the data, analysts can gradually refine their understanding of the dataset and extract deeper insights.

Understanding the Dataset

Before embarking on our exploratory journey, let's grasp the essence of our dataset. It comprises comments captured during Ramadan, spanning the moments preceding and following Iftar, the evening meal that breaks the fast. These comments emanate from diverse sources, reflecting the sentiments, emotions, and discussions prevalent during this sacred time.

Exploring Patterns and Trends

Word Frequency Analysis

Word Frequency Analysis is a powerful technique used to extract meaningful insights from text data by quantifying the frequency of occurrence of individual words or phrases within a corpus. In this section, we apply Word Frequency Analysis to our Ramadan comments dataset in Moroccan Darija, aiming to uncover the most prevalent terms and themes within the discourse.

import arabic_reshaper

from bidi.algorithm import get_display

from collections import Counter

import matplotlib.pyplot as plt

import seaborn as sns

def display_frequency_plot(df, column, stopwords):

    # Count the raw tokens first: reshaping changes the characters, so the

    # stopword filter has to run on the unshaped words

    counts = Counter(" ".join(df[column].dropna()).split())

    top = [(w, c) for w, c in counts.most_common() if w not in stopwords][:20]

    # Reshape and reorder the labels only for right-to-left display

    labels = [get_display(arabic_reshaper.reshape(w)) for w, _ in top]

    values = [c for _, c in top]

    plt.figure(figsize=(20, 10))

    palette = sns.color_palette("crest_r", n_colors=len(labels))

    sns.barplot(y=labels, x=values, palette=palette)

    plt.title("Frequency Plot")

    plt.show()

Interpretation of Results:

The Word Frequency Analysis reveals that the most frequent words in our dataset are predominantly religious, which can be attributed to the sacred nature of Ramadan. Terms like "الله" (Allah), "رمضان" (Ramadan), and "اللهم" (O Allah) dominate the discourse, reflecting the deep significance of faith and spirituality during this holy month. This observation underscores the cultural and societal norms surrounding Ramadan, where discussions often revolve around religious observance and spiritual reflection, shaping the digital discourse in meaningful ways.

Temporal Trends

In the realm of digital communication, understanding temporal patterns is crucial for deciphering trends and behaviors within online communities. In the context of Ramadan, a period marked by spiritual reflection, communal gatherings, and fasting, the temporal dynamics of online engagement hold particular significance.

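Let's first analyse the temporal distribution of comments, which reveals interesting patterns, particularly peaks of activity during specific hours of the day.

# The .dt accessor needs a datetime column, so parse 'Date' first

data['Date'] = pd.to_datetime(data['Date'], errors='coerce')

data['Hour'] = data['Date'].dt.hour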

commentaires_par_heure = data.groupby('Hour').size()

plt.figure(figsize=(10, 6))

commentaires_par_heure.plot(kind='bar', color='blue')

plt.title('Number of comments per hour of the day')

plt.xlabel('Hour of the day')

plt.ylabel('Number of comments')

plt.xticks(rotation=0)

plt.grid(axis='y', linestyle='--', alpha=0.7)

plt.tight_layout()

plt.show()

These findings highlight distinct peaks in commenting activity throughout the day, with the afternoon hours exhibiting the highest levels of engagement. Hour 15:00 emerges as the period of greatest activity, suggesting a concentration of discussions and interactions during this time frame. Late evening hours, such as 21:00 and 22:00, also see significant participation, albeit to a slightly lesser extent.

Time Series Analysis

import pandas as pd

import matplotlib.pyplot as plt

import seaborn as sns

data['Date'] = pd.to_datetime(data['Date'], format='%Y-%m-%d %H:%M:%S', errors='coerce')

data.dropna(subset=['Date'], inplace=True)

def time_series_analysis_by_source():

    sources = data['source'].unique()

    # squeeze=False keeps axes two-dimensional even with a single source

    fig, axes = plt.subplots(len(sources), 2, figsize=(15, 6 * len(sources)), squeeze=False)

    for i, source in enumerate(sources):

        # Work on a copy so adding a column does not touch the original frame

        source_data = data[data['source'] == source].copy()

        # right=False gives [0, 6), [6, 12), [12, 18), [18, 24), so hour 0 is not dropped

        source_data['Part_of_Day'] = pd.cut(source_data['Date'].dt.hour, bins=[0, 6, 12, 18, 24], labels=['Night', 'Morning', 'Afternoon', 'Evening'], right=False)

        sns.countplot(x='Part_of_Day', data=source_data, ax=axes[i][0])

        axes[i][0].set_title(f'Distribution of Comments by Part of the Day for {source}')

        axes[i][0].set_xlabel('Part of the Day')

        axes[i][0].set_ylabel('Number of Comments')

        # Iftar is approximated by a fixed 18:30 cut-off; count within this source only

        hour, minute = source_data['Date'].dt.hour, source_data['Date'].dt.minute

        is_before = (hour < 18) | ((hour == 18) & (minute < 30))

        before_iftar, after_iftar = is_before.sum(), (~is_before).sum()

        sns.barplot(x=['Before Iftar', 'After Iftar'], y=[before_iftar, after_iftar], ax=axes[i][1])

        axes[i][1].set_title(f'Total Number of Comments before and after Iftar for {source}')

        axes[i][1].set_xlabel('Part of the Day')

        axes[i][1].set_ylabel('Total Number of Comments')

    plt.tight_layout()

    plt.show()

def time_series_analysis_by_topic():

    topics = data['topic'].unique()

    # squeeze=False keeps axes two-dimensional even with a single topic

    fig, axes = plt.subplots(len(topics), 2, figsize=(15, 6 * len(topics)), squeeze=False)

    for i, topic in enumerate(topics):

        # Work on a copy so adding a column does not touch the original frame

        topic_data = data[data['topic'] == topic].copy()

        # right=False gives [0, 6), [6, 12), [12, 18), [18, 24), so hour 0 is not dropped

        topic_data['Part_of_Day'] = pd.cut(topic_data['Date'].dt.hour, bins=[0, 6, 12, 18, 24], labels=['Night', 'Morning', 'Afternoon', 'Evening'], right=False)

        sns.countplot(x='Part_of_Day', data=topic_data, ax=axes[i][0])

        axes[i][0].set_title(f'Distribution of Comments by Part of the Day for Topic: {topic}')

        axes[i][0].set_xlabel('Part of the Day')

        axes[i][0].set_ylabel('Number of Comments')

        # Iftar is approximated by a fixed 18:30 cut-off; count within this topic only

        hour, minute = topic_data['Date'].dt.hour, topic_data['Date'].dt.minute

        is_before = (hour < 18) | ((hour == 18) & (minute < 30))

        before_iftar, after_iftar = is_before.sum(), (~is_before).sum()

        sns.barplot(x=['Before Iftar', 'After Iftar'], y=[before_iftar, after_iftar], ax=axes[i][1])

        axes[i][1].set_title(f'Total Number of Comments before and after Iftar for Topic: {topic}')

        axes[i][1].set_xlabel('Part of the Day')

        axes[i][1].set_ylabel('Total Number of Comments')

    plt.tight_layout()

    plt.show()

Increased Pre-Iftar Engagement: Before Iftar, there is a notable uptick in comment activity, indicating heightened engagement on social media platforms. This surge in activity can be attributed to various factors:

Anticipation and Preparation: Individuals actively discuss meal preparations, share recipes, and express excitement for the impending breaking of the fast. Additionally, conversations about Ramadan traditions and cultural practices contribute to the vibrant pre-Iftar discourse.

Real-Time Sharing: The period preceding Iftar witnesses a surge in real-time sharing of fasting experiences and spiritual reflections. Users turn to social media to express personal anecdotes, seek communal support, and engage in collective reflection on the significance of Ramadan.

Conversely, there is a discernible decrease in comment activity after Iftar. Several factors may underlie this decline:

Post-Iftar Social Engagement: Following the breaking of the fast, individuals prioritize spending time with family and friends, participating in communal prayers, and enjoying post-Iftar meals and social gatherings. Consequently, there is a natural diversion of attention away from social media platforms, leading to reduced comment volumes.

Dynamic Nature of Online Behavior: The fluctuation in online engagement before and after Iftar underscores the dynamic interplay between cultural traditions, religious observance, and digital interaction during Ramadan. These patterns highlight the evolving nature of user behavior and preferences within the context of this sacred month.
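The before/after comparison can also be computed as a table instead of a plot. The sketch below is a minimal version under stated assumptions: the four timestamps are invented, and 18:30 is the same fixed cut-off used in the plotting code, which is a simplification because the actual Iftar time shifts from day to day.

```python
import pandas as pd

# Hypothetical sample standing in for the merged comments
data = pd.DataFrame({
    "source": ["Facebook", "Facebook", "YouTube", "YouTube"],
    "Date": pd.to_datetime([
        "2024-03-15 15:10:00", "2024-03-15 18:45:00",
        "2024-03-15 18:29:00", "2024-03-15 22:05:00",
    ]),
})

# Minutes since midnight, compared with the fixed 18:30 cut-off
minutes = data["Date"].dt.hour * 60 + data["Date"].dt.minute
data["period"] = (minutes < 18 * 60 + 30).map(
    {True: "Before Iftar", False: "After Iftar"}
)

# One row per source, one column per period
counts = data.groupby(["source", "period"]).size().unstack(fill_value=0)
print(counts)
```

Because every comment falls in exactly one of the two periods, the counts for each source add up to that source's total.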

Language Distribution

Examining the distribution of languages within the dataset reveals a notable disparity between Arabic and French comments, with Arabic comments significantly outnumbering French comments. This observation underscores the predominance of Arabic as the primary language of communication and expression within the context of Ramadan discussions.

The overwhelming presence of Arabic comments reflects the cultural and linguistic dynamics inherent in conversations surrounding Ramadan, particularly in regions where Arabic is the predominant language of communication. Arabic serves as the medium through which individuals articulate their thoughts, sentiments, and reflections on the significance of Ramadan, fostering a sense of shared cultural identity and belonging among participants.

While French comments may represent a minority within the dataset, their presence underscores the linguistic diversity and multiculturalism inherent in online discourse surrounding Ramadan. These comments may originate from individuals with varying linguistic backgrounds, contributing to the richness and diversity of perspectives within the digital conversation.
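The language split described above can be measured with a one-line count. The sketch below uses a hypothetical five-value column; it also repeats the earlier choice of filling missing languages with 'ar', which is worth keeping in mind because it adds every unlabelled comment to the Arabic share.

```python
import pandas as pd

# Hypothetical language labels, including one missing value
langs = pd.Series(["ar", "ar", "fr", "ar", None], name="Language")

# Same rule as in the preprocessing step: missing language is treated as Arabic
langs = langs.fillna("ar")

# Share of each language among all comments
share = langs.value_counts(normalize=True)
print(share)
```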

Topic Modeling

Topic modeling is a powerful method for uncovering recurring themes and patterns within textual data. In our analysis of Ramadan comments, we use topic modeling to reveal the underlying topics prevalent in the discourse. By identifying these themes, we gain valuable insights into the diverse narratives and interests shaping conversations during this sacred month.

from sklearn.feature_extraction.text import CountVectorizer

from sklearn.decomposition import LatentDirichletAllocation

# Convert comments to a list

comments_list = data['clean_comment'].tolist()

# Create a CountVectorizer to convert comments into a matrix of token counts

vectorizer = CountVectorizer(max_df=0.95, min_df=2, stop_words=None)

X = vectorizer.fit_transform(comments_list)

# Specify the number of topics

n_topics = 3

# Run LDA

lda_model = LatentDirichletAllocation(n_components=n_topics, max_iter=10, learning_method='online', random_state=42)

lda_Z = lda_model.fit_transform(X)

# Get the feature names (words)

feature_names = vectorizer.get_feature_names_out()

# Create a dictionary to store the top words for each topic

topics_dict = {}

n_top_words = 20

for topic_idx, topic in enumerate(lda_model.components_):

    topic_name = f"Topic_{topic_idx}"

    # argsort is ascending, so the reversed slice picks the n_top_words largest weights

    topic_words = [feature_names[i] for i in topic.argsort()[:-n_top_words - 1:-1]]

    topics_dict[topic_name] = topic_words

# Print the topic names and their respective top words

for topic_name, topic_words in topics_dict.items():

    print(f"{topic_name}: {', '.join(topic_words)}")

Utilizing topic modeling techniques, we have identified three distinct topics within the dataset:

Topic_0: This topic revolves around religious themes, with keywords such as "الله" (Allah), "رمضان" (Ramadan), and "اللهم" (O Allah) indicating discussions centered on faith, supplication, and spiritual reflection. Other terms like "فلسطين" (Palestine) suggest engagement with social and humanitarian issues within a religious context.

Topic_1: The keywords in this topic pertain to entertainment and media content, with terms like "مسلسل" (series), "فيلم" (film), and "حلقة" (episode) indicating discussions related to television shows, movies, and popular culture. This topic reflects a divergence from religious discourse, focusing instead on leisure and entertainment activities.

Topic_2: This topic encompasses a mix of religious and cultural references, with keywords such as "المغرب" (Morocco), "محمد" (Mohammed), and "صلى" (the verb in the blessing "صلى الله عليه وسلم") suggesting discussions related to Islamic teachings, Moroccan culture, and expressions of gratitude and blessings.
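The sections that follow filter on a `topic` column, which the code above does not create. A common way to obtain it, sketched here as an assumption rather than the project's confirmed method, is to assign each comment the topic with the highest probability in the document-topic matrix. The six comments and the choice of two topics are illustrative only.

```python
import pandas as pd
from sklearn.feature_extraction.text import CountVectorizer
from sklearn.decomposition import LatentDirichletAllocation

# Hypothetical cleaned comments
data = pd.DataFrame({"clean_comment": [
    "رمضان كريم", "اللهم صل على محمد", "احسن مسلسل",
    "مسلسل زوين", "رمضان مبارك", "حلقة زوينة",
]})

X = CountVectorizer().fit_transform(data["clean_comment"])
lda = LatentDirichletAllocation(n_components=2, random_state=42)

# Rows are comments, columns are topics, each row sums to 1
doc_topic = lda.fit_transform(X)

# Dominant topic = column with the highest probability in each row
data["topic"] = doc_topic.argmax(axis=1)
print(data)
```

The integer labels can then be mapped by hand to readable names such as those used in the text, once the top words of each topic have been inspected.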

Extracting Top Bigrams for Each Topic Using CountVectorizer

from sklearn.feature_extraction.text import CountVectorizer

# Define a function to extract the top n-grams for each topic

def extract_top_bigrams(topic):

    # Filter comments based on the topic

    topic_comments = data[data['topic'] == topic]['clean_comment'].tolist()

    # ngram_range=(2, 5) extracts word sequences of 2 to 5 tokens, not only bigrams

    vectorizer = CountVectorizer(ngram_range=(2, 5), max_features=10000)

    X = vectorizer.fit_transform(topic_comments)

    # Get feature names (the n-grams)

    feature_names = vectorizer.get_feature_names_out()

    # Total count of each n-gram across the topic's comments

    bigram_counts = X.sum(axis=0)

    # Create a dictionary to store n-grams and their counts

    bigram_dict = {bigram: count for bigram, count in zip(feature_names, bigram_counts.A1)}

    # Get the top 20 most common n-grams

    top_20_bigrams = sorted(bigram_dict.items(), key=lambda x: x[1], reverse=True)[:20]

    # Print header

    print(f"{'='*40}\nTop 20 most common bigrams for {topic}:\n{'='*40}")

    # Print the top 20 most common n-grams

    for i, (bigram, count) in enumerate(top_20_bigrams, 1):

        print(f"{i}. {bigram}: {count} occurrences")

# Iterate over topics and extract the top n-grams for each topic

topics = data['topic'].unique()

for topic in topics:

    extract_top_bigrams(topic)

The bigrams extracted for the Entertainment topic predominantly consist of phrases related to television shows, movies, and online content. Phrases like "احسن مسلسل" (best series), "مسلسل زوين" (nice series), and "دنيا بوطازوت" (Dounia Boutazout, a Moroccan actress) indicate discussions about specific TV series and personalities. Additionally, expressions such as "شي لايكات" (some likes) and "لايكات نحسو" (likes we count) suggest engagement with social media metrics and audience interaction. The prevalence of laughter-related phrases like "ههههههههههه" (hahaha) underscores the informal and lighthearted nature of the discussions within this topic.

In contrast, the bigrams extracted for the Religion and Ramadan topic are predominantly religious expressions and blessings associated with Ramadan. Phrases like "تبارك الله" (blessings of Allah), "رمضان كريم" (blessed Ramadan), and "اللهم صل" (O Allah, bless) reflect the reverence and piety inherent in Ramadan-related discourse. Expressions such as "جزاكم الله" (may Allah reward you) and "ماشاء الله" (as Allah wills) convey expressions of gratitude and acknowledgment of divine blessings. The repetition of phrases like "الله الله الله" (Allah Allah Allah) emphasizes the centrality of God in the conversations, reflecting a deep spiritual connection and devotion among participants.

Comment Length Analysis

In exploring the dynamics of online discourse, descriptive statistics offer valuable insights into the characteristics and patterns of communication within different thematic categories. In this context, we analyze descriptive statistics for comments categorized under two distinct topics: Entertainment and Religion/Ramadan. By examining mean comment length, mean word count, and mean number of characters, we gain a deeper understanding of the communication styles and content preferences within each topic.

import matplotlib.pyplot as plt

import seaborn as sns

def length_analysis(topic, col):

    topic_comments = data[data[col] == topic]['clean_comment']

    # Length of each comment in characters and in whitespace-separated words

    comment_lengths = topic_comments.apply(len)

    word_counts = topic_comments.apply(lambda x: len(x.split()))

    mean_length = comment_lengths.mean()

    mean_word_count = word_counts.mean()

    # Same measure as mean_length: both count characters per comment

    mean_characters = comment_lengths.mean()

    # Print descriptive statistics

    print(f"\n{'='*40}\nDescriptive Statistics for {topic}:\n{'='*40}")

    print(f"Mean Comment Length: {mean_length:.2f} characters")

    print(f"Mean Word Count: {mean_word_count:.2f}")

    print(f"Mean Number of Characters: {mean_characters:.2f} characters")

    # Create visualization

    fig, axes = plt.subplots(1, 3, figsize=(15, 5))

    sns.histplot(comment_lengths, kde=True, ax=axes[0])

    axes[0].set_title('Comment Length Distribution')

    axes[0].set_xlabel('Comment Length')

    axes[0].set_ylabel('Frequency')

    sns.histplot(word_counts, kde=True, ax=axes[1])

    axes[1].set_title('Word Count Distribution')

    axes[1].set_xlabel('Word Count')

    axes[1].set_ylabel('Frequency')

    sns.histplot(comment_lengths, kde=True, ax=axes[2])

    axes[2].set_title('Character Count Distribution')

    axes[2].set_xlabel('Number of Characters')

    axes[2].set_ylabel('Frequency')

    plt.tight_layout()

    plt.show()

# Iterate over topics and perform length analysis for each topic

topics = data['topic'].unique()

for topic in topics:

    length_analysis(topic, 'topic')

For comments categorized under the Entertainment topic, the descriptive statistics reveal a mean comment length of 44.70 characters and approximately 7.72 words per comment. The "mean number of characters" reported by the code is the same measurement as the mean comment length, so the two values coincide. These figures point to a relatively concise style: discussions related to entertainment tend to be succinct and to the point, reflecting the informal and casual nature of the conversations.

In contrast, comments categorized under the Religion and Ramadan topic exhibit a longer mean comment length of 68.84 characters. On average, each comment in this category comprises approximately 11.53 words. The higher mean comment length and word count suggest a more elaborate and detailed style of expression within discussions related to religion and Ramadan. Participants in these discussions may engage in more in-depth reflections, prayers, and expressions of devotion, contributing to the longer and more nuanced comments observed in this category.

Analyzing Comment Length and Word Count by Source Platform

Facebook

Comments originating from Facebook have a mean length of 59.61 characters and a mean word count of 9.77. This suggests a moderately concise communication style, with comments typically comprising short sentences or phrases. Facebook comments are shorter on average than those on Twitter and Hespress, though slightly longer than those on YouTube.

Twitter

Twitter comments exhibit a slightly longer mean length of 63.06 characters and a mean word count of 10.52. While still concise, Twitter users appear to write slightly more than Facebook users. Twitter's character limit keeps every post within a constrained space, which may explain why the average nonetheless stays far below that of Hespress.

Hespress

In contrast, comments from Hespress display a significantly longer mean length of 156.21 characters and a mean word count of 24.26. These statistics indicate a more verbose and detailed communication style on Hespress, with users often expressing complex thoughts or opinions in their comments. The higher mean comment length suggests that discussions on Hespress may be more in-depth and comprehensive compared to other platforms.

YouTube

Comments on YouTube have a mean length of 54.66 characters and a mean word count of 9.32. Similar to Facebook, YouTube comments tend to be relatively concise, with users typically conveying their thoughts or reactions in short messages. The lower mean comment length may reflect the nature of interactions on YouTube, where comments are often brief and focused on immediate reactions to the content.
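The per-platform figures above can be reproduced with a single groupby. The sketch below shows the idea on a hypothetical three-row frame; the column names `source` and `clean_comment` follow the earlier code, while `n_chars` and `n_words` are names introduced here for illustration.

```python
import pandas as pd

# Hypothetical sample standing in for the full dataset
data = pd.DataFrame({
    "source": ["Facebook", "Facebook", "Hespress"],
    "clean_comment": ["رمضان كريم", "تبارك الله", "مقال طويل فيه بزاف ديال التفاصيل"],
})

# Character and word counts per comment
lengths = data.assign(
    n_chars=data["clean_comment"].str.len(),
    n_words=data["clean_comment"].str.split().str.len(),
)

# Mean of both measures for each platform
summary = lengths.groupby("source")[["n_chars", "n_words"]].mean()
print(summary.round(2))
```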

In conclusion, this comprehensive exploration of comment data during Ramadan sheds light on various aspects of online discourse during this sacred month. Through techniques such as word frequency analysis, temporal trend exploration, language distribution analysis, topic modeling, and time series analysis, we've gained valuable insights into the patterns, themes, and dynamics of comments before and after Iftar. This analysis not only enhances our understanding of online engagement during Ramadan but also underscores the importance of Exploratory Data Analysis (EDA) in uncovering meaningful insights from complex datasets. As we continue to delve deeper into data analysis, it's crucial to leverage these techniques to derive actionable insights that can inform decision-making and deepen our understanding of societal trends and behaviors.

Machine Learning and Deep Learning models

In our project, we harness the power of machine learning (ML) and deep learning (DL) to unlock valuable insights from Moroccan Darija comments and tweets. Our approach involves leveraging a variety of ML and DL models to tackle different aspects of the data analysis process.

Clustering Models for Topic Segmentation

To uncover underlying themes and topics within the dataset, we utilize clustering models. These models group similar comments and tweets together based on their content, allowing us to identify distinct topics of discussion within the Moroccan online community. By applying clustering techniques, we gain a deeper understanding of the diverse range of subjects that are relevant to Moroccan users.
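The article does not say which clustering algorithm the project uses. As one possible illustration, the sketch below combines TF-IDF vectors with K-Means from scikit-learn; both choices, the four sample comments, and the number of clusters are assumptions made for the example.

```python
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.cluster import KMeans

# Hypothetical cleaned comments
comments = ["رمضان كريم", "رمضان مبارك", "احسن مسلسل", "مسلسل زوين"]

# Turn each comment into a weighted bag-of-words vector
X = TfidfVectorizer().fit_transform(comments)

# Group the vectors into two clusters; n_init restarts guard against bad seeds
kmeans = KMeans(n_clusters=2, n_init=10, random_state=42)
labels = kmeans.fit_predict(X)

for comment, label in zip(comments, labels):
    print(label, comment)
```

Comments that share vocabulary end up close together in the TF-IDF space, which is what lets a distance-based method group them by theme.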

Next Word Prediction Model

Another crucial aspect of our project is the development of a next word prediction model. This model predicts the most probable word to follow a given sequence of words, taking into account the context of the conversation. By accurately predicting the next word, we enhance the coherence and fluency of generated text, improving the overall quality of our analysis and insights.
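The project's actual next word model is not described here. To make the idea concrete, the sketch below uses a much simpler stand-in: it counts which word follows each word in a tiny invented corpus and predicts the most frequent follower. The function name `predict_next` is hypothetical.

```python
from collections import Counter, defaultdict

# Hypothetical training sentences
corpus = ["رمضان كريم", "رمضان كريم عليكم", "رمضان مبارك"]

# Count which word follows each word across the corpus
followers = defaultdict(Counter)
for sentence in corpus:
    tokens = sentence.split()
    for current, nxt in zip(tokens, tokens[1:]):
        followers[current][nxt] += 1

def predict_next(word):
    # Most frequent follower, or None for words never seen with a successor
    options = followers.get(word)
    return options.most_common(1)[0][0] if options else None

print(predict_next("رمضان"))
```

A neural model conditions on the whole preceding sequence rather than on one word, but the training signal is the same: observed continuations in the corpus.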

Models Available on Hugging Face via the Link Below

DARIJABERT: Sentiment Prediction Model

One of the highlights of our project is the implementation of DARIJABERT, a specialized sentiment prediction model trained on Moroccan Darija data. Inspired by state-of-the-art language models like BERT, DARIJABERT excels in understanding and predicting sentiment in Moroccan Darija comments and tweets. By leveraging deep learning techniques, DARIJABERT enables us to accurately assess the sentiment expressed in online discussions, providing valuable insights into the attitudes and opinions of Moroccan users.

Conclusion

Through the application of ML and DL models, we are able to delve deep into the world of Moroccan online discourse, uncovering hidden patterns, sentiments, and topics of interest. These advanced techniques empower us to extract meaningful insights from vast amounts of data, ultimately enhancing our understanding of Moroccan culture, society, and online behavior.

Conclusion

As we conclude this chapter, we are reminded of the transformative potential of collaborative endeavors like those championed by the MDS community. By harnessing the power of data and technology, we not only gain insights into societal trends and cultural expressions but also pave the way for informed decision-making, community engagement, and positive social change.

As we look towards the future, let us continue to embrace the spirit of collaboration, curiosity, and inclusivity that defines the MDS community. Together, we will continue to explore, innovate, and inspire, shaping a brighter tomorrow through the lens of data science and community-driven initiatives.

Explore a preview of our project : https://moroccansentimentsanalysis.com